The Last of Us Part 2 Remastered isn't perfect on PC but it's a million times better than Part 1

Wave goodbye to VRAM and shader compilation problems but say hello to a major CPU workout.

It's fair to say that the PC port of The Last of Us Part 1 wasn't a huge success to begin with. Original developers Naughty Dog and porting team Iron Galaxy produced a conversion that performed rather poorly and was blighted by excessive shader compilation, bugs, and memory leaks. It took a good while but eventually, most of the issues were resolved, though the clunky shader compilation stage still remains.

So when it was announced that Naughty Dog had brought in Nixxes Software to join Iron Galaxy for the PC port of The Last of Us Part 2, I was quietly confident that this conversion would be a much smoother affair, not least because Nixxes has developed some clever tools to optimise shader compilation and it has a good track record of porting Sony's classic games.

That said, Marvel's Spider-Man 2 was far from being Nixxes' best work so there was always a chance that The Last of Us Part 2 (or to give it its full name, The Last of Us Part 2 Remastered…TLOU2 from now, methinks) would be just as troublesome.

Fortunately, that's not the case and I have to say that this is one of the better PlayStation ports I've tested. It's not perfect, as you'll see in due course, but for the most part, TLOU2 scales pretty well across a range of PC configurations and doesn't have any issues with VRAM usage; shader compilation is also managed very nicely.

However, the game will demand an awful lot of your CPU and system RAM, and even on maximum graphics settings, the visuals aren't quite as tip-top as they could be. Room for improvement but very good otherwise, as my old school teachers would say.

Test PC specs

- Acer Nitro V 15 (Gaming mode), Ryzen 7 7735HS, RTX 4050 Laptop (75 W), 16 GB DDR5-4800

- Core i7 9700K (65 W), 16 GB DDR4-3200, Radeon RX 5700 XT, Radeon RX 6750 XT

- Ryzen 5 5600X (65 W), 16 GB DDR4-3200, GeForce RTX 3060 Ti

- Ryzen 7 5700X3D (105 W), 32 GB DDR4-3200, GeForce RTX 4070

- Core i5 13600K (125 W), 32 GB DDR5-6400, GeForce RTX 4070

- CyberPowerPC/MSI, Ryzen 7 9800X3D (120 W), 32 GB DDR5-6400, GeForce RTX 5080

- Monitors: Acer Nitro XV282K KV / MSI MPG 321URX

- Operating System: Windows 11 24H2

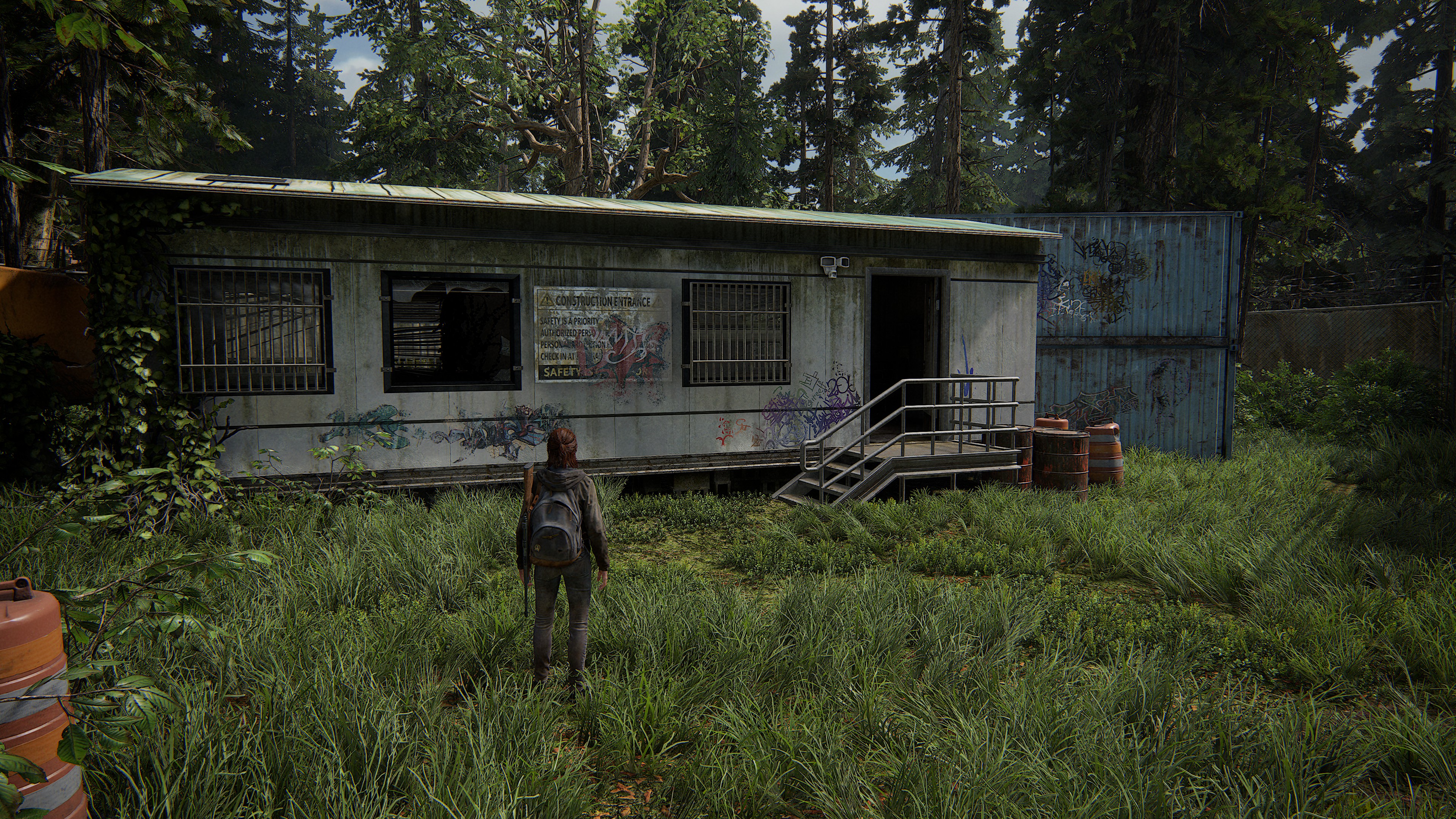

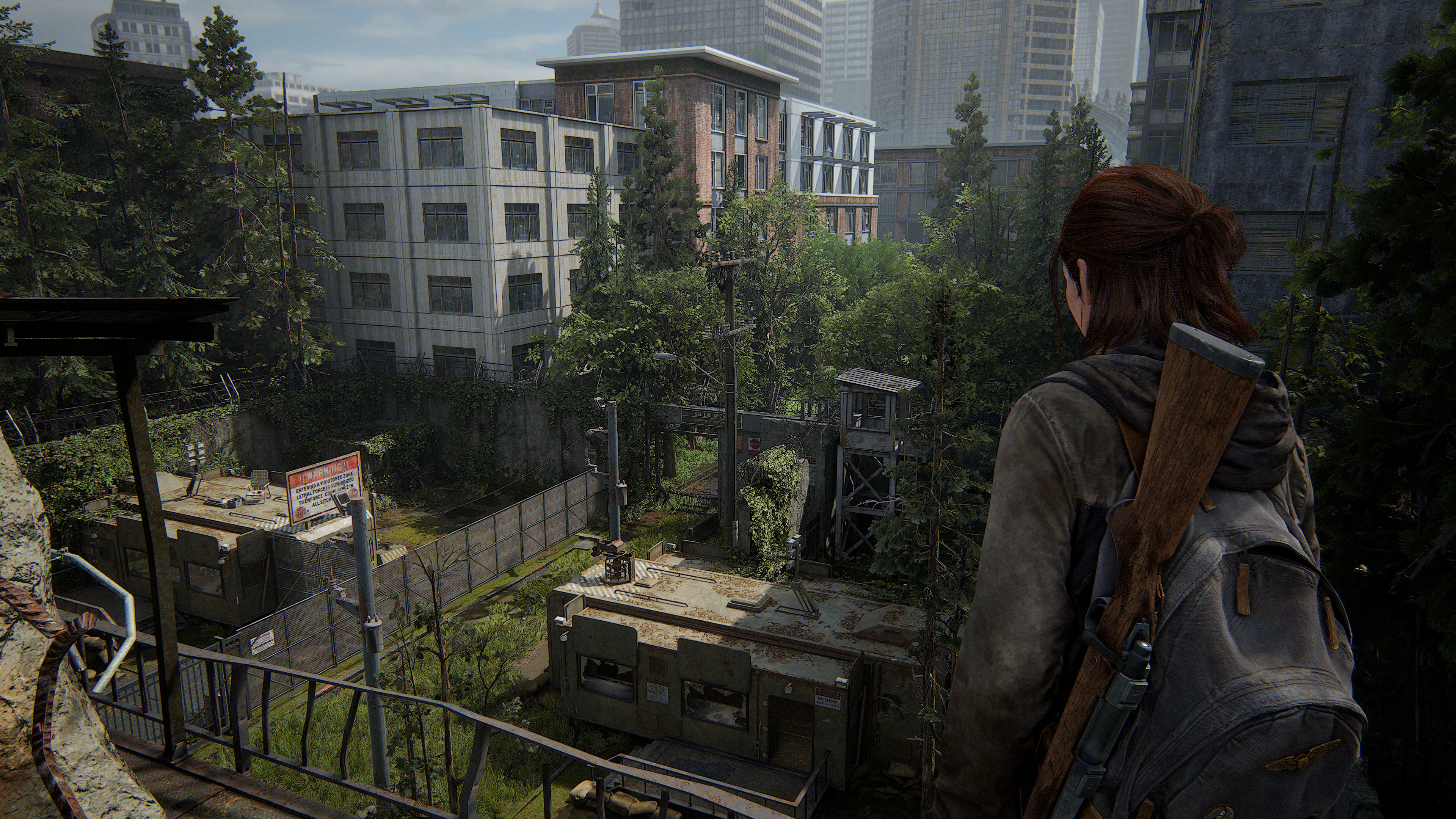

Just like its predecessor, TLOU2 is a linear action game, but this time there is significantly more scope to some of the levels. Environments range from claustrophobic building interiors to dense forests and sprawling urban areas. It's not open-world in any sense of the term, but some levels do feel very expansive.

That means there is a fair degree of performance variation so I've chosen a short run through a forest area, early in the game, to give you a rough idea as to how well TLOU2 performs. What the stage lacks in draw distance, it more than makes up for in terms of pixel processing load thanks to the detailed lighting models and heavy use of shadows. Naturally, different sections of the game run slower or faster, but this section provides a good metric.
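For anyone wanting to compare their own runs, here's a minimal sketch of how average and 1% Low frame rates can be derived from a list of captured frame times. It assumes one common definition of 1% Low (the average frame rate over the slowest 1% of frames); capture tools differ on this, and the function name is purely illustrative rather than anything from the tools used for this article.

```python
from statistics import mean


def summarize_frame_times(frame_times_ms):
    """Return (average_fps, one_percent_low_fps) for frame times given in ms."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average fps is total frames over total time, not the mean of per-frame fps.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Assumed definition of 1% Low: average fps across the slowest 1% of frames.
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    one_percent_low_fps = 1000.0 / mean(slowest_first[:count])

    return average_fps, one_percent_low_fps


if __name__ == "__main__":
    # 99 smooth frames at 10 ms plus a single 50 ms hitch.
    avg, low = summarize_frame_times([10.0] * 99 + [50.0])
    print(f"average: {avg:.1f} fps, 1% low: {low:.1f} fps")
```

A single long frame barely moves the average but drags the 1% Low right down, which is why the latter is the better guide to how smooth a game feels.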


After all the testing and footage captures were done, TLOU2 received a huge 82 GB patch. It wasn't possible to retest every system in time but what I can say is that the performance figures you'll see below are still representative of the game as a whole. The patch has improved matters on low settings but dropped the frame rates a little at higher settings.

The main thing the patch fixed was the notable pop-in of objects, textures, and shadows that spoiled the visuals somewhat, even on the highest graphics quality settings. Please note that the videos of each preset are with the patch applied, but the performance charts are before the patch, including the upscaling videos.

Very Low quality preset

Starting with the lowest quality graphics preset, we can see that none of the test PCs struggle to run The Last of Us Part 2 at 1080p—and apart from some very obvious object and shadow pop-in, the overall looks are pretty good.

In fact, it looks suspiciously good at times: textures are somewhat blurry, but the lighting and quality of the shadowing are very good for a 'very low' preset. The level of detail (LOD) transitions are quite jarring at times, though you can't expect miracles at this setting.

Something that I found surprising was the VRAM usage at 1080p Very Low. In all cases, every GPU was using around 90% of its local memory (assessed using PIX on Windows) and this didn't change when I increased the resolution to 1440p and then 4K. It turns out this happens with all of the quality presets and there's only one explanation.

The Last of Us Part 2 uses an asset streaming system, just as in Part 1, and it's clear that Naughty Dog, Iron Galaxy, and Nixxes Software have done an excellent job at making this work so well. At no point does any GPU try to exceed the amount of VRAM available and as one increases the quality settings and/or resolution, the streaming buffer adjusts in size to ensure that everything remains within limits.
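To picture how a streaming buffer might resize itself, here's a toy sketch. It is not how the game's engine actually works: the function name, the 1,500 MB of fixed allocations, the 64 bytes per pixel for render targets, and the 90% target are all assumptions I've picked for illustration (the last one simply echoes the usage I measured).

```python
MB = 1024 * 1024


def streaming_pool_mb(vram_mb, width, height,
                      fixed_mb=1500.0, bytes_per_pixel=64, target_fraction=0.90):
    """Size a hypothetical streaming pool so total VRAM use stays at the target."""
    # Resolution-dependent buffers (render targets and the like), in MB.
    render_targets_mb = width * height * bytes_per_pixel / MB
    # Whatever is left under the target goes to streamed assets, never below zero.
    budget_mb = vram_mb * target_fraction
    return max(0.0, budget_mb - fixed_mb - render_targets_mb)


if __name__ == "__main__":
    for vram_mb in (6144, 8192, 16384):
        for width, height in ((1920, 1080), (2560, 1440), (3840, 2160)):
            pool = streaming_pool_mb(vram_mb, width, height)
            print(f"{vram_mb} MB card at {width}x{height}: pool of {pool:.0f} MB")
```

In this model, total usage sits at the same fraction of VRAM whatever the resolution, and it's the pool that shrinks as the resolution climbs, leaving more to be fetched from system memory on demand.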

However, that does mean the less VRAM a GPU has access to, the more assets it will need to stream from the system memory over the PCIe bus. This is why there is such a significant difference in the 1% Low frame rates between the Ryzen 7 5700X3D and Core i5 13600K PCs—the latter has DDR5-6400 CL32 whereas the former has DDR4-3200 CL16.

The faster memory system of the latter gives the 13600K an edge over the 5700X3D, either when the game is very CPU-limited or when it has to stream more assets over the PCIe bus.
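The memory gap between those two systems can be put into rough numbers. This sketch works out theoretical peak bandwidth and CAS latency from the kit ratings; it assumes both PCs run their RAM in a dual-channel configuration (64 bits per channel), and real-world throughput will be lower than these peak figures.

```python
def peak_bandwidth_gbs(transfer_rate_mts, channels=2, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits // 8
    return transfer_rate_mts * 1_000_000 * bytes_per_transfer * channels / 1e9


def cas_latency_ns(transfer_rate_mts, cas_cycles):
    """CAS latency in nanoseconds."""
    # DDR moves data twice per clock, so the clock in MHz is half the MT/s rating.
    clock_mhz = transfer_rate_mts / 2
    return cas_cycles * 1000 / clock_mhz


if __name__ == "__main__":
    kits = {"DDR4-3200 CL16": (3200, 16), "DDR5-6400 CL32": (6400, 32)}
    for name, (rate, cas) in kits.items():
        print(f"{name}: {peak_bandwidth_gbs(rate):.1f} GB/s peak, "
              f"{cas_latency_ns(rate, cas):.1f} ns CAS latency")
```

Under those assumptions, the two kits have the same CAS latency in nanoseconds but the DDR5 setup has twice the peak bandwidth, which fits with it coping better when lots of assets are being shuffled about.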

Low quality preset

You'd be forgiven for thinking that the above video is just a copy of the Very Low preset one but it's simply a case that each preset is only marginally better looking than the preceding one. Texture and shadow resolutions are a little better, and there is a tad more detail in the world, but overall, the Low preset isn't significantly nicer looking than the Very Low preset.

The performance hit from increasing the preset is pretty small, so if your gaming PC copes well enough at Very Low, it should have no problem dealing with the Low preset.

It's worth spending a moment to take a look at the 4K performance results for the old Core i7 9700K, Radeon RX 5700 XT test PC. This is a hardware configuration that sits very close to the minimum hardware requirements for TLOU2 so the relatively low frame rates at, say, 1440p are perfectly understandable, but the fact that the performance drops off a cliff at 4K is a little puzzling.

It's not a VRAM issue because (a) the asset streaming system prevents it in the first place and (b) the RTX 4050 laptop has less VRAM than the RX 5700 XT (6 vs 8 GB), and its frame rates don't plummet at 4K. I suspect it's down to the fact that AMD's RX 5000-series of GPUs don't support Smart Access Memory (aka Resizable BAR) but exactly why it's so bad isn't clear.

Medium quality preset

Another preset notch up the quality scale and once again, we see small improvements here and there, coupled with small performance decreases across the board. All of the test systems run TLOU2 perfectly well at 1080p or 1440p with the Medium preset.

Disappointingly, though, asset and shadow pop-in is still quite noticeable, and I had hoped that this would clear up with the Medium preset. At the very least, I expected it to only apply to distant objects but one can clearly see the LOD system working away very near to the characters.

At 4K we come to the magic '60 fps' barrier for the RTX 4070 so that means if you have such a card and you're hoping to use a higher quality preset, then you'll need to keep the resolution to no more than 1440p or use upscaling (which essentially does the same thing).

High quality preset

The High preset offers no surprises, apart from the fact that every test PC runs perfectly well at 1080p or 1440p, though the RTX 4050 laptop is beginning to struggle a little here. It's nothing that a spot of upscaling can't fix, though, as TLOU2 is rendered using lots of traditional techniques (generally grouped under the catch-all term of 'rasterization') that are all very pixel count/resolution dependent—decrease the number of pixels requiring processing and you'll pull back the performance.
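To show how much of the pixel load upscaling removes, here's a small sketch that converts an output resolution into an internal render resolution. The per-axis scale factors are the ones AMD publishes for FSR's quality modes (DLSS uses very similar ratios); whether TLOU2 sticks to exactly these values is an assumption on my part.

```python
# Per-axis scale factors as published for FSR quality modes (assumed to apply here).
SCALE_FACTORS = {"Quality": 1.5, "Balanced": 1.7, "Performance": 2.0}


def render_resolution(output_width, output_height, mode):
    """Internal render resolution for a given output resolution and upscaling mode."""
    factor = SCALE_FACTORS[mode]
    return round(output_width / factor), round(output_height / factor)


def pixel_fraction(output_width, output_height, mode):
    """Share of the output pixel count that is actually rendered."""
    width, height = render_resolution(output_width, output_height, mode)
    return (width * height) / (output_width * output_height)


if __name__ == "__main__":
    for mode in SCALE_FACTORS:
        width, height = render_resolution(3840, 2160, mode)
        share = pixel_fraction(3840, 2160, mode)
        print(f"4K {mode}: renders at {width}x{height} ({share:.0%} of the pixels)")
```

With these factors, 4K in Quality mode renders internally at 2560 x 1440, so the GPU is processing well under half the pixels of native 4K.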

My main issue with the High preset is that asset pop-in is still very noticeable. I'd prefer to sacrifice a bit of performance to have all of this a lot smoother than it currently is. It's not as distracting as it is with the Medium or Low presets, and it's better than it was pre-patch, but I'd say it's still not good enough.

Very High quality preset

The Last of Us Part 2 looks its very best with the Very High preset, as one would expect, and the world and characters look fantastic with this graphics setting. At 4K, on a large OLED monitor, the environments, lighting, and shadows are top-notch.

With this preset, asset and shadow pop-in (caused by LOD transitions) all but disappear, though it is still evident if you look for it. Prior to the 82 GB patch, it was really quite bad and although some performance has been used up to solve the problem, there was plenty of spare fps to begin with.

As this is all down to the asset streaming system, there's the possibility for even more tweaks to be applied via a future patch, that will either remove the transitions altogether (at the cost of more streaming) or push them even further away from the camera, so that they're practically unnoticeable.

It's still good news with regards to the performance, as the RTX 3060 Ti and RX 6750 XT PCs cope really well at 1080p and the RTX 4070 is fine at 1440p. When it comes to 4K, that's the preserve of the RTX 5080 but given that it's so far ahead of the 4070 (almost double the frame rate), any GPU that outperforms a 4070 in traditional rendering will be fine at this resolution.

Upscaling performance

The performance testing of the quality presets shows that the use of upscaling and frame generation is only needed if one is searching for frame rates significantly above 60 fps. That said, it's worth using DLSS, FSR, or XeSS upscaling just to get better anti-aliasing. By default, TAA (temporal anti-aliasing) is used and while it's perfectly serviceable, it does make certain elements a little fuzzy or induce pixel crawling on high-contrast edges.

TLOU2 offers a decent spread of the latest upscaling and frame generation tech with DLSS 3.7, FSR 3.1 and 4.0 being fully implemented, although only the first version of XeSS is available for Intel Arc owners and there's no DLSS 4, either. In the case of AMD and Nvidia's systems, frame generation is decoupled from the upscaler, so one can mix and match whatever system one likes.

FSR Balanced upscaling

Asus ROG Ally, 1080p, Very Low preset, 15 W mode

There's one test platform that I didn't include in the preset charts and that's my usual Asus ROG Ally. The reason is simple: it can't hit a consistent 30 fps at Very Low, even when using its 30 W power mode.

It's a different story once you enable FSR upscaling but while the performance looks reasonably consistent in the above video, running in a 15 W mode, you can also see that it doesn't take much for it to drop below 30 fps. That's even the case when the Ally is used at full power.

The 82 GB patch certainly helped matters on the little handheld (it was a lot slower before) so there's a chance that it might be playable on a Steam Deck at launch or with a future patch.

DLSS Quality upscaling

Ryzen 7 7735HS, GeForce RTX 4050, 1080p, High preset

The RTX 4050 laptop doesn't need upscaling but the use of DLSS Quality helps lift the performance over the 60 fps barrier, generally making it a bit smoother to play when the action really heats up.

As to the use of frame generation, when enabled with DLSS Quality, you get a fair amount of shimmering across most surfaces (it's much better in the patched version but still present), especially those with grass and shadows. It disappears when you use DLSS Balanced or Performance, but at 1080p, the use of those upscaling modes induces too much blurring of fine details.

DLSS Quality upscaling + FSR frame generation

Ryzen 5 5600X, GeForce RTX 3060 Ti, 1440p, Medium preset

It's a similar story with the RTX 3060 Ti—it doesn't need upscaling or frame generation, but it's nice to experiment with them to see what kind of uplift you can get. Interestingly, the use of FSR frame gen with DLSS Quality upscaling doesn't produce the same level of shimmering that one gets with DLSS frame gen (and it's not overly noticeable in the above video due to compression) but Nvidia should have a driver ready for the launch of TLOU2, and I'll be surprised if the issue is present with that update.

FSR Balanced upscaling

Ryzen 5 5600X, Radeon RX 6750 XT, 1440p, Very High preset

Where FSR frame generation works quite well with the RTX 3060 Ti, it makes things a little too glitchy with the RX 6750 XT. There's no obvious reason for this, as they're both using the same algorithms and more importantly, the Radeon's drivers (Adrenalin 25.3.2) already support The Last of Us Part 2.

At 1440p Very High native, the RX 6750 XT struggles to hit 60 fps but with FSR Balanced upscaling, the performance crosses into the realms of consistency and the game is more enjoyable to play.

DLSS Balanced upscaling + frame generation

Ryzen 7 9800X3D, GeForce RTX 5080, 4K, Very High preset

With no ray tracing effects to grind the GPU to dust, the only reason why one would use upscaling and frame generation with an RTX 5080 is to get the best possible frame rate. You can see how well it runs with DLSS Balanced + frame gen above—DLSS Performance obviously generates the best performance but I prefer the looks of Balanced, as it stops fine details in the distance from being removed.

CPU analysis

If you've read this far, I'm sure you'll be pleased by how well The Last of Us Part 2 runs, especially if you played Part 1 in its launch state. This port is a much better affair and other than the annoying LOD transitions, TLOU2 looks and runs great.

However, during my testing, I couldn't help but notice that on every system, the CPU's cooler was working overtime. That's not unusual for a modern game but the Ryzen 7 9800X3D normally breezes through such workloads, barely rising in temperature or drawing much power.

As it turns out, The Last of Us Part 2 really works the CPU—so much so that the old Core i7 9700K was running at 100% utilization on every core, all the time. Curious as to what's going on underneath the hood, I first recorded the CPU usage with Task Manager for the 13600K and 9800X3D systems running TLOU2 at 1080p Very High.

As you can see, Intel's Raptor Lake processor is working almost all of its cores, including the E-cores, so I repeated the process for just the P-cores being active (essentially turning the 13600K into a six-core, 12-thread chip). That just loaded up the P-cores to near-constant full utilization, so just what's going on to warrant 12 to 16 threads of solid processing?

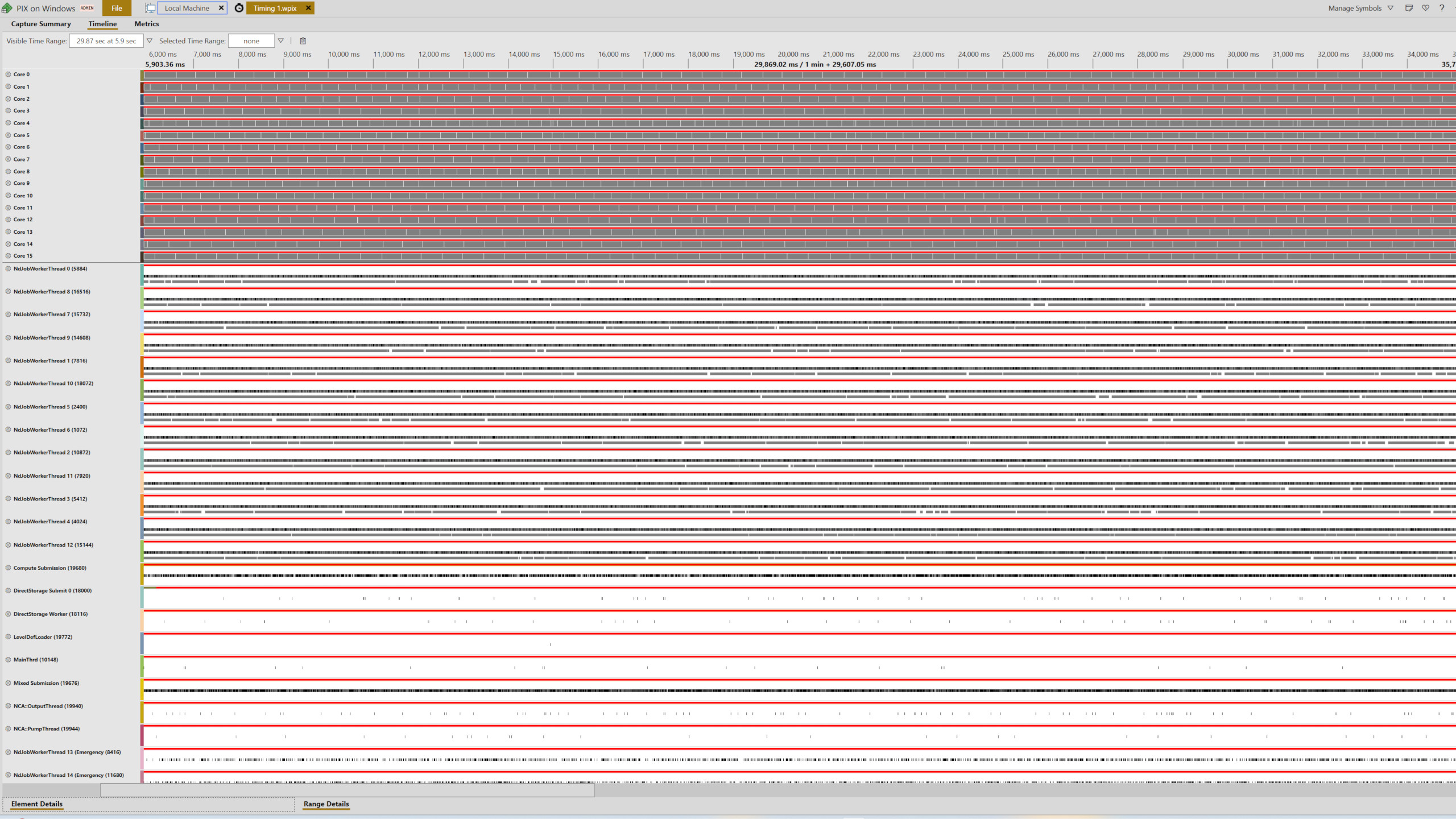

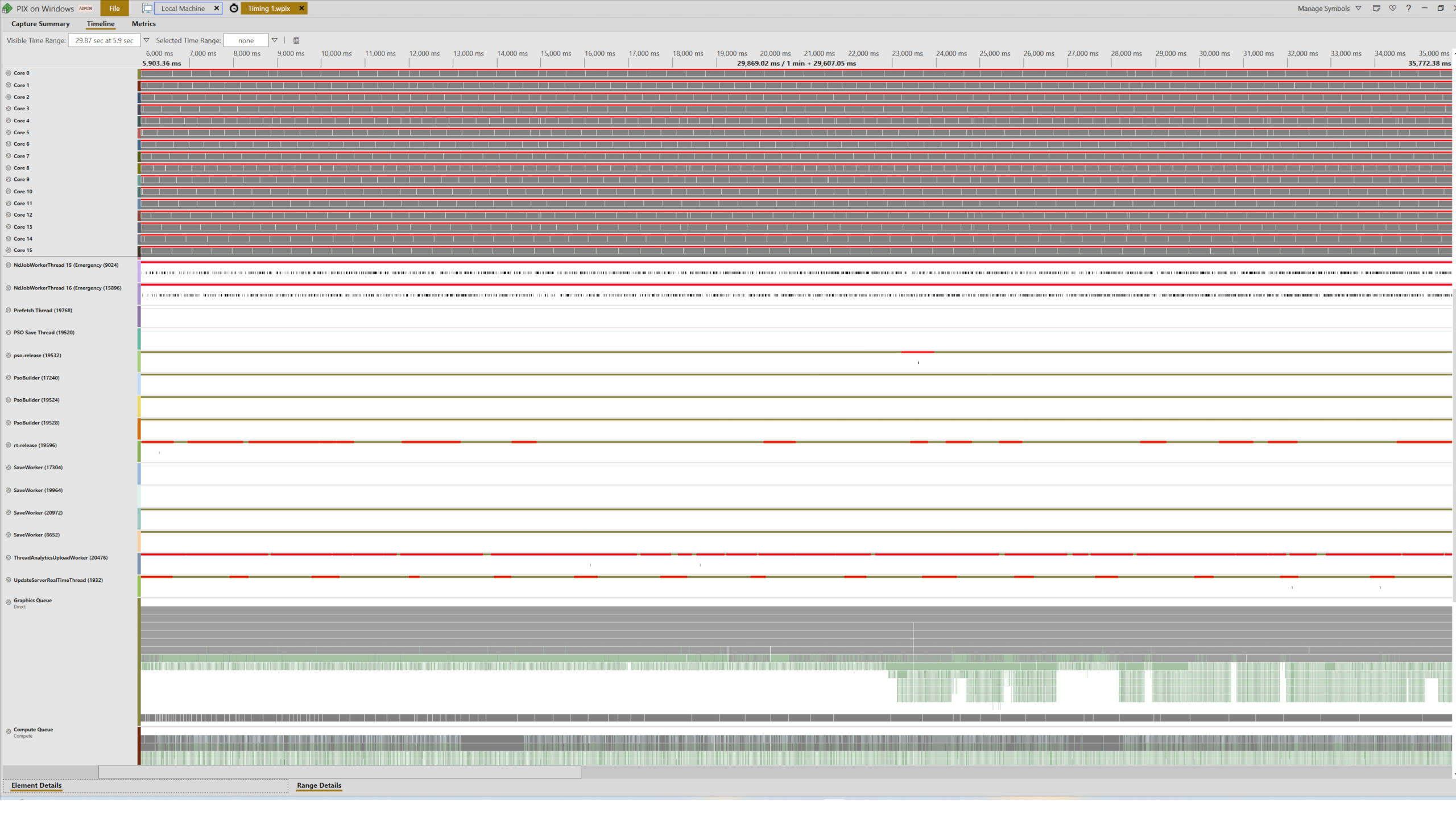

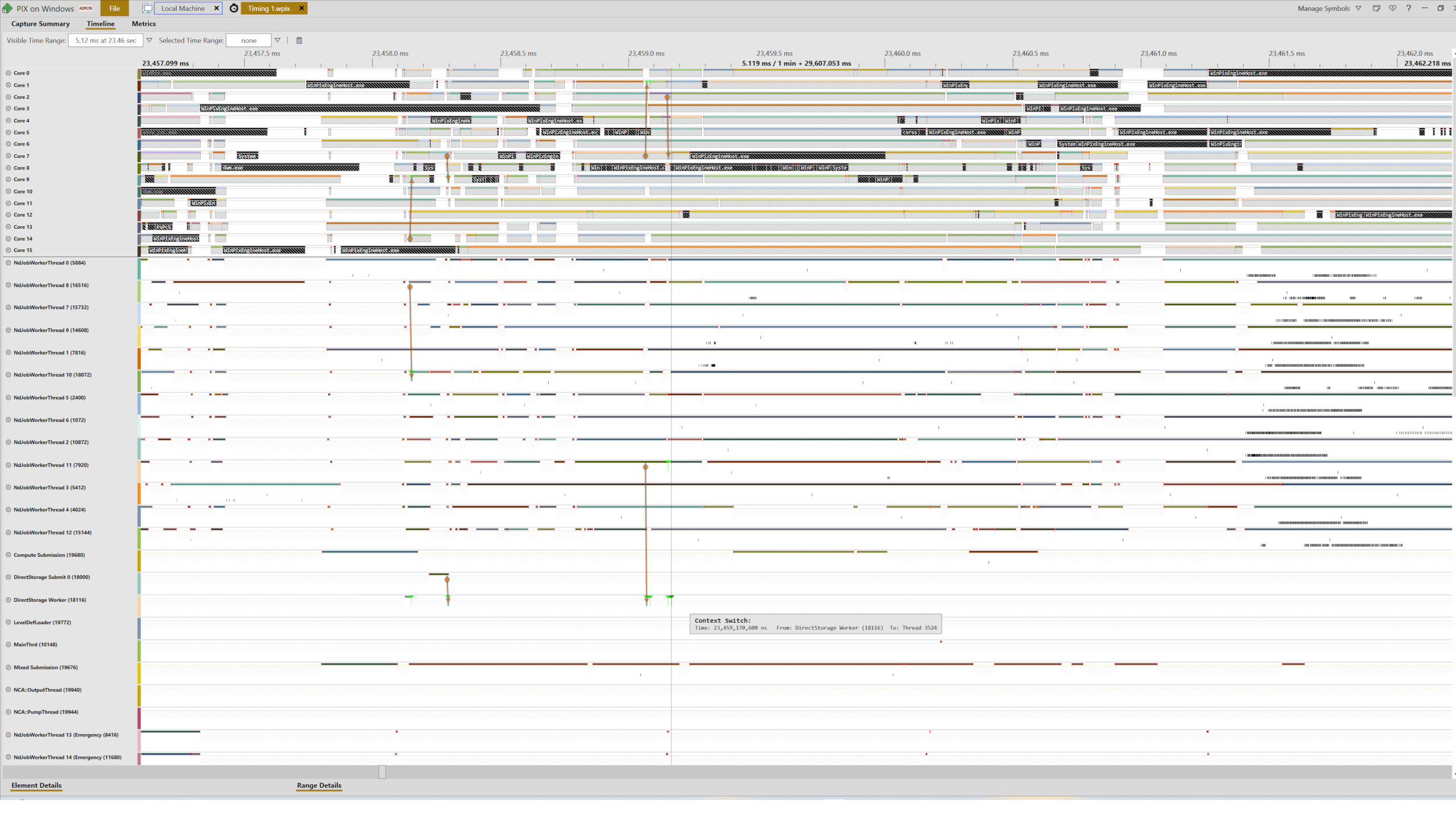

Using PIX on Windows, I captured thread distribution and API calls over a run through the forest and was greeted by the following wall of information:

Delving into a slice of this shows that TLOU2's engine generates multiple worker threads, along with threads for handling DirectStorage transactions, as well as PSO compilation (aka shader compiling). In the case of the latter, this is a system that Nixxes developed to reduce the need for a lengthy compilation stage upon the first load of a game.

The Last of Us Part 2 still has such a stage but only for loading a save file, and even then, it's very brief. After that, it does all the compiling on an as-required basis during gameplay via dedicated threads. The worker threads are probably involved in handling the asset streaming mechanism and I've seen this in a few games before, though never to the extent that TLOU2 does it.
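The general idea of compiling on an as-required basis can be sketched with a small cache that hands work to background threads. This is emphatically not Nixxes' implementation: the class and method names are hypothetical, and Python threads are only standing in for the engine's native worker threads.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class PipelineCache:
    """Toy on-demand cache: each key is compiled once, on a background thread."""

    def __init__(self, compile_fn, workers=4):
        self._compile_fn = compile_fn
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._futures = {}
        self._lock = threading.Lock()

    def request(self, key):
        """Kick off compilation for `key` if it hasn't been requested; never blocks."""
        with self._lock:
            future = self._futures.get(key)
            if future is None:
                future = self._pool.submit(self._compile_fn, key)
                self._futures[key] = future
        return future

    def get(self, key):
        """Return the compiled result, waiting only if it isn't ready yet."""
        return self.request(key).result()

    def shutdown(self):
        self._pool.shutdown(wait=True)


if __name__ == "__main__":
    cache = PipelineCache(lambda key: f"compiled:{key}")
    cache.request("grass")     # requested ahead of time, compiles in the background
    print(cache.get("grass"))  # ready (or nearly) by the time it's needed
    cache.shutdown()
```

The more often a request lands before the result is needed, the less the main thread has to wait, which is the trade being made here: a long up-front compile is swapped for a steady trickle of background CPU work.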

Mindful that Intel's E-cores aren't as powerful as its P-cores, I initially thought that the performance of the 13600K system was being held back by them. So I ran the tests again but with the RTX 5080 in the Ryzen 5 5600X, Ryzen 7 5700X3D, Core i5 13600K PCs, and a Core Ultra 7 265K (Arrow Lake) setup.

As you can see, the use of the E-cores actually isn't a problem, though I suspect Intel will want to add TLOU2 to its Application Optimizer tool at some point to better handle all the relevant threads. What's most interesting to see is just how well (or rather, how poorly) the 265K fares against the 13600K—only at 1080p does it lead the older chip and that's almost certainly down to the fact that this system is using DDR5-8000 RAM.

Final thoughts

So, there you have it—a Last of Us PC port done properly. I have no doubt that Naughty Dog and Iron Galaxy learned some valuable lessons from their collaboration for Part 1, and with Nixxes Software along for the ride in Part 2, the end result is a game that looks really good and performs fine on lots of different gaming PCs.

It makes a pleasant change to have a game where upscaling and frame generation are completely optional, but that should be the case for any game that isn't using ray tracing everywhere.

I've not mentioned anything about the best settings to use but that's simply because you don't need to tweak them to get good performance. Just start with the High preset and then either change the resolution or apply upscaling to get the frame rate you want. Personally, I don't like the looks that the film grain and chromatic aberration options add, so I'd recommend disabling them but other than that, there's not much else worth adjusting.

That all said, it's not 100% perfect. The LOD transitions are quite distracting, even on the High preset, and the serious CPU workload might give your processor's cooling a lot more to deal with than any game has done so far. But given the tradeoff (no stuttering, no grindingly long shader compilation, no VRAM issues), these issues are acceptable.

Now, if you'll excuse me, I have a bunch of clickers that need addressing. Lock and load, Ellie; it's fungus mashing time.

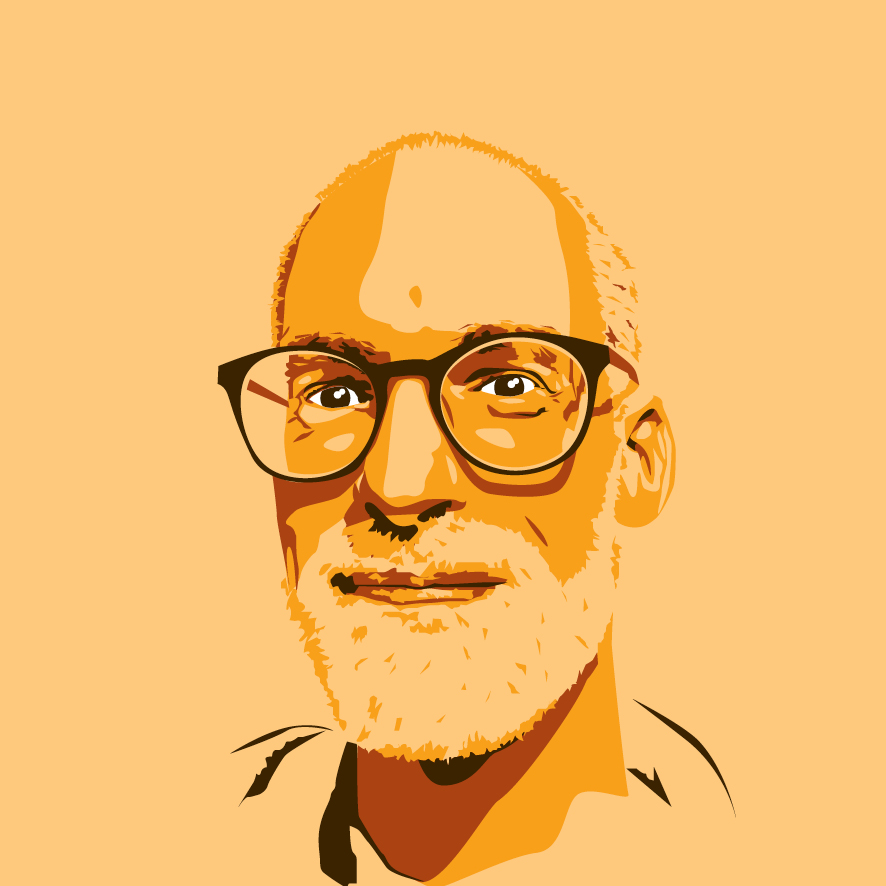

Nick, gaming, and computers all first met in 1981, with the love affair starting on a Sinclair ZX81 in kit form and a book on ZX Basic. He ended up becoming a physics and IT teacher, but by the late 1990s decided it was time to cut his teeth writing for a long defunct UK tech site. He went on to do the same at MadOnion, helping to write the help files for 3DMark and PCMark. After a short stint working at Beyond3D.com, Nick joined Futuremark (MadOnion rebranded) full-time, as editor-in-chief for its gaming and hardware section, YouGamers. After the site shut down, he became an engineering and computing lecturer for many years, but missed the writing bug. Cue four years at TechSpot.com and over 100 long articles on anything and everything. He freely admits to being far too obsessed with GPUs and open world grindy RPGs, but who isn't these days?
